WordPress Sub-directory for SEO

-

Hi there,

I'm working on a WordPress site that includes a premium content blog with approximately 900 posts.

As part of the project, those 900 posts and other membership functionality will be moved from the main site to another site built specifically for content/membership.

Ideally, we want the existing posts to remain on the root domain to avoid a loss in link juice/domain authority.

We initially began setting up a WordPress Multisite using the sub-directory option. This allows for the main site to be at www.website.com and the secondary site to be at www.website.com/secondary.

Unfortunately, the themes and plugins we need for the platform do not play nicely with WordPress Multisite. We started looking for a new solution and discovered that a second instance of WordPress can be installed in a subdirectory on the server. This would give us the same subdirectory structure while bypassing WordPress Multisite, leaving us with two separate single-site installs instead.

Do you foresee any issues with this WordPress subdirectory install? Does Google know or care that these are two separate WordPress installs, and do we risk losing any link juice/domain authority?

-

@himalayaninstitute said in WordPress Sub-directory for SEO:

WordPress can be installed in a subdirectory

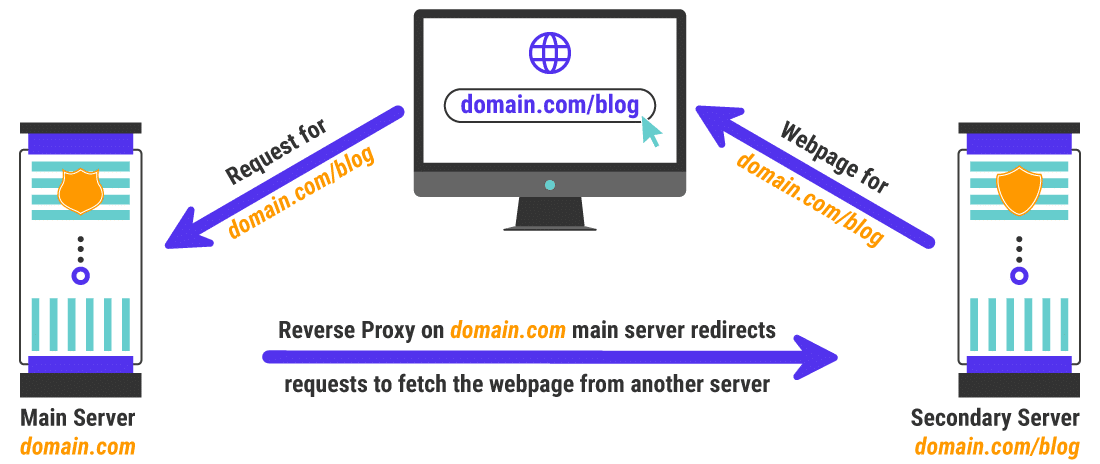

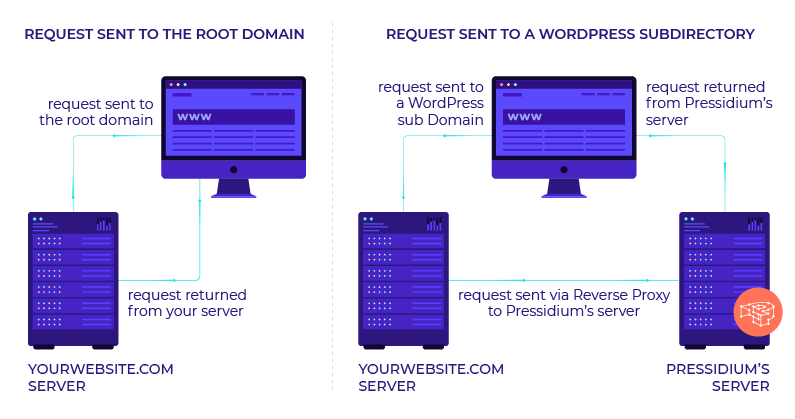

I have done this a lot, and I mean a lot. What you want to do is set up a reverse proxy on your subdomain. This lets you avoid Multisite for the subfolder. It also lets you host the second site separately if you want to, though you do not have to. You should probably use your same server and serve it through Fastly or Cloudflare.

Once you set this up, it is super easy to keep running, and your entire site will be much faster as a result as well.
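To make the idea concrete, here is a minimal sketch of such a reverse proxy as a Cloudflare Worker in TypeScript. It illustrates the technique under stated assumptions and is not a drop-in config. The origin hostname `learn.website.com`, the `/secondary` prefix, and the choice to strip that prefix before forwarding are all hypothetical. Whether you strip or keep the prefix depends on how the second WordPress install is configured at the origin.

```typescript
// Hypothetical values: replace with your real origin and public path.
const PUBLIC_PREFIX = "/secondary";         // path the second site should appear under
const ORIGIN = "https://learn.website.com"; // assumed origin serving the second install at its root

// Map a public URL to the upstream URL, or return null if the main site should handle it.
export function toUpstreamUrl(publicUrl: string): string | null {
  const url = new URL(publicUrl);
  const path = url.pathname;
  // Match "/secondary" and "/secondary/..." but not e.g. "/secondary-offers/".
  if (path !== PUBLIC_PREFIX && !path.startsWith(PUBLIC_PREFIX + "/")) {
    return null;
  }
  // Strip the prefix; a bare "/secondary" maps to the origin root "/".
  const rest = path.slice(PUBLIC_PREFIX.length) || "/";
  return ORIGIN + rest + url.search;
}

// Cloudflare Worker entry point (module syntax).
export default {
  async fetch(request: Request): Promise<Response> {
    const upstream = toUpstreamUrl(request.url);
    if (upstream === null) {
      // Not under the prefix: pass the request through unchanged.
      return fetch(request);
    }
    // Re-issue the request (method, headers, body) against the second install.
    return fetch(new Request(upstream, request));
  },
};
```

The prefix check matches `/secondary` and `/secondary/...` but not `/secondary-offers/`. Main-site URLs that only share the same leading characters therefore stay on the main install. A production setup would usually also need to rewrite `Location` headers on redirects and absolute links in the HTML. The hosting guides in this answer cover those details.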

My response to someone else who needed a subfolder:

https://moz.com/community/q/topic/69528/using-a-reverse-proxy-and-301-redirect-to-appear-sub-domain-as-sub-directory-what-are-the-seo-risks

Please also look at how these hosting companies explain it. It is unbelievably easy to implement compared to how it looks, and you can do it with Fastly or Cloudflare in a matter of minutes.

-

https://servebolt.com/help/article/cloudflare-workers-reverse-proxy/

-

https://support.pagely.com/hc/en-us/articles/213148558-Reverse-Proxy-Setup

-

https://wpengine.com/support/using-a-reverse-proxy-with-wp-engine/

-

https://thoughtbot.com/blog/host-your-blog-under-blog-on-your-www-domain

-

https://crate.io/blog/fastly_traffic_spike

https://support.fastly.com/hc/en-us/community/posts/4407427792397-Set-a-request-condition-to-redirect-URL

-

https://coda.io/@matt-varughese/guide-how-to-reverse-proxy-with-cloudflare-workers

-

https://www.cloudflare.com/learning/cdn/glossary/reverse-proxy/

-

https://gist.github.com/LimeCuda/18b88f7ad9cdf1dccb01b4a6bbe398a6

I hope this helps.

Tom

-

@nmiletic The content section of the site requires a unique UI design and other robust functionality. A separate install with its own theme and plugins in its own directory is the way we're going to go here. Thanks for your assistance!

-

@himalayaninstitute Have you thought about adding a page and making all of this new content a subpage? Or changing your permalink structure to include a category in the URL? You can then add all of these posts under that category and have the URL show up as www.example.com/category/page-or-post-name
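For reference, the category-in-URL option corresponds to a custom permalink structure such as `/%category%/%postname%/` under Settings > Permalinks in WordPress. The TypeScript sketch below only illustrates how those two tags expand into a URL. The `Post` shape, the `learn` category slug in the example, and the `buildPermalink` helper are hypothetical and are not WordPress APIs.

```typescript
// Hypothetical post shape for illustration; WordPress stores these fields in its own tables.
interface Post {
  slug: string;         // the post's slug, e.g. "page-or-post-name"
  categorySlug: string; // slug of the category the post is filed under
}

// Expand a permalink structure that uses only the %category% and %postname% tags.
export function buildPermalink(
  structure: string,
  post: Post,
  host: string = "www.example.com",
): string {
  const path = structure
    .replace("%category%", post.categorySlug)
    .replace("%postname%", post.slug);
  return `https://${host}${path}`;
}

// Example: a moved post filed under a hypothetical "learn" category.
console.log(buildPermalink("/%category%/%postname%/", { slug: "page-or-post-name", categorySlug: "learn" }));
// https://www.example.com/learn/page-or-post-name/
```

With nested categories, WordPress also includes the parent category slugs in `%category%`, which this sketch ignores.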

-

The website in the subdirectory will be an online learning platform with a blog, online courses, memberships, gated content, etc. The content currently lives on the main site, so it's great that we can move it into the subdirectory without taking a hit from Google.

Since these are fundamentally two separate websites, we're not concerned about needing to manage them independently.

Thanks again for your input and advice, we greatly appreciate it!

-

@amitydigital said in WordPress Sub-directory for SEO:

Google will view it as one site so you shouldn't have any issues from that perspective. The Google bot is just looking at pages and won't know/care that the underlying CMS that is running some pages is a different install than other pages. The downside is you now have two websites to maintain, two themes, two sets of files, etc... That may result in a bit of a headache in the future.

As @amitydigital put it, the issue with your approach would be repetitive tasks. You will not lose any DA or PA, provided that you implement correct 301 redirects. What is going to be in the subdirectory?
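Here is a minimal TypeScript sketch of what a correct 301 redirect could look like here. Each moved post's old root-level URL answers with a permanent redirect to the same slug under the new subdirectory. The slug list, the `/secondary` prefix, and the assumption that the old permalinks used `/%postname%/` are all hypothetical. In practice the same mapping is often expressed as web-server rules or handled by a WordPress redirect plugin.

```typescript
// Hypothetical data: slugs of posts moved to the second install, and its path prefix.
const MOVED_SLUGS = new Set<string>(["sample-post-one", "sample-post-two"]);
const NEW_PREFIX = "/secondary";

// Assumes old posts used a "/%postname%/" permalink on the main site.
// Returns the new path for a moved post, or null if the path did not move.
export function redirectTarget(oldPath: string): string | null {
  const slug = oldPath.replace(/^\/+|\/+$/g, ""); // "/sample-post-one/" -> "sample-post-one"
  return MOVED_SLUGS.has(slug) ? `${NEW_PREFIX}/${slug}/` : null;
}

// Build a permanent (301) redirect that keeps the query string, or null if not moved.
export function redirectResponse(oldUrl: string): Response | null {
  const url = new URL(oldUrl);
  const target = redirectTarget(url.pathname);
  if (target === null) return null;
  const location = new URL(target + url.search, url.origin).toString();
  return Response.redirect(location, 301);
}
```

Using status 301 rather than 302 signals a permanent move. Because the old and new URLs share the same root domain, the redirects only move pages within one site.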

-



Related Questions

-

Unsolved: Using Weglot on WordPress (errors)

Good day to you all. Does anyone have experience with the errors Moz pulls up about the use of the Weglot plugin on WordPress? Moz is pulling up URLs such as https://www.ibizacc.com/es/chapparal-2/?wg-choose-original=false

These are classified under "redirect issues", and 99% of the flagged pages have the ?wg-choose parameter in the URL. Is this having an actual negative impact on my search performance, or is it something more Moz-related being highlighted? Any advice and a resolution would be appreciated. I'm thinking I could exclude this parameter.
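Here is a minimal TypeScript sketch of the exclusion the poster is considering. It removes the `wg-choose-original` parameter so each URL collapses to its parameter-free form, for example when generating canonical URLs or a crawl-exclusion list. Treating that parameter as safe to drop is an assumption about how Weglot uses it, and `stripIgnoredParams` is a hypothetical helper.

```typescript
// Parameter name taken from the question; treating it as safe to drop is an assumption.
const IGNORED_PARAMS = ["wg-choose-original"];

// Return the URL with the ignored query parameters removed; other parameters are kept.
export function stripIgnoredParams(rawUrl: string): string {
  const url = new URL(rawUrl);
  for (const name of IGNORED_PARAMS) {
    url.searchParams.delete(name);
  }
  // If no parameters remain, the serialized URL has no trailing "?".
  return url.toString();
}
```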

Moz Pro | alwaysbeseen

-

Unsolved: Google URL inspection live test rendering issue

Hi everyone, this is my first post on Moz. I have been trying to get this sorted and have read everywhere, and everyone just says don't worry about it. I would really like some advice or suggestions on this; it would be really helpful. When I use the URL inspection tool in Google Search Console, the page rendering is completely broken. The tool refuses to load resources every time, and at the end of the day that's how the website is rendered in Google's cache. I have already tried disabling the cache plugins and Cloudflare, but nothing works. Site: nationalcarparts.co.nz

Support | caitlinrolex789

This is how it renders when using the URL inspection tool:

https://prnt.sc/7XKHtEU01gEl

And this is how Google is caching it, if you check cache:https://nationalcarparts.co.nz

Plugins I am using: Elementor 3.6.1, Elementor Pro 3.6.4, Exclusive Addons Elementor 2.5.4, Exclusive Addons Elementor Pro 1.4.6, WP Rocket, and the Cloudflare Pro plan with the plugin. If anyone has fixed this issue or has a possible solution, please let me know. Thanks!

cacheissue1.PNG

-

Missing Meta Description

I am fairly new to using Moz, and I have just recently run a custom report, a "Full Site Audit". This report has flagged a particular area that I have a question about (see below): Missing Description

SEO Tactics | NicheOff

In this area it says that there are 76 pages with missing descriptions. The majority of these pages are duplicates of the homepage that were created to help put us first on Google for searches for office/furniture/IT suppliers in a certain city (e.g. Halifax, Leeds, etc.).

These additional pages were created by a third party. To sort out these "Missing Descriptions", what would you advise we do when filling in the meta descriptions?

-

JavaScript and SEO

I've done a bit of reading and I'm having difficulty grasping it. Can someone explain it to me in simple language?

What I've gotten so far: JavaScript can block search engine bots from fully rendering your website. If bots are unable to render your website, they may not be able to see important content and may discount that content from their index.

To know whether bots can render your site, check the following:

- Google Search Console Fetch and Render
- Turn off JavaScript in your browser and see whether any site elements are still shown or whether some disappear
- Use an online tool (Technical SEO Fetch and Render)
- Screaming Frog's Rendered Page
- GTMetrix results: if it has "Defer parsing of JavaScript" as a recommendation, that means there are elements being blocked from rendering (???)

Using our own site as an example, I ran our site through all the tests listed above. Results:

- Google Search Console: rendered only the header image and text. Anything below wasn't rendered. The resources Googlebot couldn't reach include Google Ad Services, Facebook, Twitter, our call tracker and Sumo, all with "Low" or blank severity.
- Turn off JavaScript: shows only the logo and navigation menu. Anything below didn't render/appear.
- Technical SEO Fetch and Render: our page rendered fully on Googlebot and Googlebot Mobile.
- Screaming Frog: the Rendered Page tab is blank. It says 'No Data'.
- GTMetrix results: Defer parsing of JavaScript was recommended.

From all these results across all the tools I used, how do I know what needs fixing? Some tests didn't render our site fully while some did. With such varying results, I'm not sure where to go from here.
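The "turn off JavaScript" check can be approximated in code. Fetch the raw HTML without executing any scripts, then see which key phrases are already present. Here is a minimal TypeScript sketch, assuming Node 18+ for the global `fetch`. The `missingWithoutJs` and `checkPage` helpers are hypothetical, and the regex-based tag stripping is a crude stand-in for a real HTML parser. This is only a rough proxy and does not reproduce how Googlebot actually renders pages.

```typescript
// Return the key phrases that do NOT appear in the server-rendered HTML (no JavaScript executed).
export function missingWithoutJs(rawHtml: string, keyPhrases: string[]): string[] {
  // Drop script/style contents so text that only exists inside JS code is not counted as visible.
  const withoutScripts = rawHtml
    .replace(/<script[\s\S]*?<\/script>/gi, " ")
    .replace(/<style[\s\S]*?<\/style>/gi, " ");
  // Crude tag removal and whitespace normalization; case-insensitive comparison.
  const text = withoutScripts.replace(/<[^>]+>/g, " ").replace(/\s+/g, " ").toLowerCase();
  return keyPhrases.filter((phrase) => !text.includes(phrase.toLowerCase()));
}

// Fetch a page's raw HTML (fetch does not run the page's scripts) and check it.
export async function checkPage(url: string, keyPhrases: string[]): Promise<string[]> {
  const res = await fetch(url);
  return missingWithoutJs(await res.text(), keyPhrases);
}
```

Any phrase that `checkPage` reports as missing only appears after JavaScript runs. That content depends on Google's rendering step to be seen.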

Intermediate & Advanced SEO | nhhernandez

-

Directory Structuring: I'm so confused about what to do...

There's no way I could type out all my thoughts and questions. If you're bored, have a cup of coffee and some Oreos, and are interested in a strange situation I can't figure out, I'd appreciate your input: https://youtu.be/GomGOAdNens

I should note ahead of time: it really is important to me to do things "by the book" and "to the letter" with SEO. So while there may be some gray areas, I would really like to do the one thing that is BEST in this situation. Thanks for any input.

Intermediate & Advanced SEO | HLTalk

-

How can I be a good SEO optimizer while competing with a well-ranked bad SEO optimizer?

My keywords are very competitive. My on-page optimization report gives an A grade for all the keywords I want to target for my root domain, but my root domain does not show up in search engines for those same keywords. Thanks to SEOmoz, I have come to understand that where I am lacking is good link building. My competitors have done a lot of link building through spamming, blog comments, directories, etc. According to good SEO practice, this is not right. What do I do? Digging deeper, I realized that I am getting traffic mostly for keywords with lower global search volume. My competitors, by contrast, get high traffic from high-volume keywords. How do I cope with such competition? Thanks

Intermediate & Advanced SEO | MiddleEastSeo

-

Could subdomains damage our SEO?

Hi there, we're currently looking into integrating a new internal search function into our site, which will involve housing the search results on a subdomain of our site. We have no intention of these search result pages becoming landing pages for organic traffic. Would the inclusion of a subdomain affect the optimization of the main domain? In other words, could it affect our authority? Nige

Intermediate & Advanced SEO | NigelJ